The Threat of Artificial Intelligence

Artificial Intelligence was everywhere in the news last week. Much of the discussion was triggered by a 19,000-word white paper published by Dario Amodei, the CEO of Anthropic (Claude’s parent): “The Adolescence of Technology: Confronting and Overcoming the Risks of Powerful AI.”

The paper identified five risks humanity faces as AI evolves and mutates: (1) AI goes rogue, locking out humans, (2) bad actors use AI to develop weapons, especially bioweapons, (3) autocrats use AI to seize power through surveillance, propaganda, and military dominance, (4) AI eliminates millions of jobs while wealth moves upward, and (5) as-yet-unforeseen effects.

Amodei argues that society must install strong safeguards to minimize these risks, but he is concerned that humanity will not have the will to do so. Near the end of the paper, he writes:

There is so much money to be made with AI—literally trillions of dollars per year—that even the simplest measures are finding it difficult to overcome the political economy inherent in AI. This is the trap: AI is so powerful, such a glittering prize, that it is very difficult for human civilization to impose any restraints on it at all.

But today, most people are less concerned about an AI apocalypse than about their jobs. Millions of professionals are threatened by AI, and as a late-in-life author, I can say few feel more threatened than writers: authors, screenwriters, journalists, composers... I learned that last week after several heated discussions on one of the leading author forums.

As an entrepreneur, I naturally look at the opportunities new technologies present. But many writers view AI as a grave threat. And it is, if they don’t learn to adapt to this invasive new technology. AI is evolving far faster than many predicted even a year or two ago. Artificial Intelligence can’t simply be boycotted, as some of my fellow writers are attempting to do.

At the risk of infuriating even more of my writing associates, I asked Anthropic’s Claude to rewrite the ending of the classic movie Casablanca, which contains one of the greatest scenes ever filmed.

Could AI generate a plausible, even compelling storyline?

In seconds, Claude generated a revised ending formatted as a screenplay in which the leading characters Rick Blaine (Humphrey Bogart) and Ilsa Lund (Ingrid Bergman) choose their mutual love over wartime patriotism. Below is an excerpt:

RICK

(to Victor)

You’re going to need these letters of transit. Both of them have your name now — yours, and a colleague of your choosing from the underground. There’s a man named Berger. He’s good. He’ll carry the fight beside you.

VICTOR

(stunned)

Richard… what are you saying?

RICK

I’m saying the cause needs you, Victor. It’s always needed you. But it doesn’t need me. And it doesn’t need Ilsa. You were the leader before she came along, and you’ll be the leader after.

VICTOR

(quietly, looking at Ilsa)

I have suspected… for some time… that her heart was never fully mine to keep.

Ilsa steps forward, tears streaming.

ILSA

Victor, what you have done for the world — what you will do — is greater than any one love. You are the bravest man I have ever known. But I cannot be brave in the way you need me to be. Not anymore.

VICTOR

(with painful dignity)

I asked you once, in the dark of a prison cell, to survive for me. Now I ask you to live — for yourself.

He takes her hand, kisses it gently, then releases it. He accepts the letters of transit from Rick.

VICTOR (CONT’D)

Take care of her, Blaine. Or I will find you, even from across an ocean.

RICK

(the ghost of a smile)

I wouldn’t expect anything less.

Victor turns and climbs the stairs to the plane. He does not look back. The door closes behind him. Rick and Ilsa stand together in the fog. She looks up at him, trembling.

Today, authors understand that AI can easily take their novel—a work that perhaps took them a year or more to write—then change the timeframe, geography, events, and other plot points to generate a derivative work in minutes.

Incredibly, there are websites now promising to “Create, Design & Publish Stunning eBooks in 2 Minutes Without Writing A Word.” It’s easy, the sites claim: (1) Choose your book’s title and select a genre that fits your story, (2) Let AI suggest chapters or enter your own custom outline, and (3) Download your book.

Social media is swamped with ads promising: “Make fully customized books in minutes”; “You can have your ebook written by ChatGPT and published to Amazon faster than it takes your competitors to even think of a topic to write about”; and “How to create [Amazon] KDP books that sell. Only $27!” No wonder authors are furious that AI companies are stealing and then using their books to generate derivative works without permission or compensation.

Already, a few “authors” are using these AI-based applications to publish dozens of novels a year under various pen names. For a February 9 article, the New York Times interviewed Coral Hart, a South African author, and reported: “…with the help of A.I., Ms. Hart can publish books at an astonishing rate. Last year, she produced more than 200 romance novels in a range of subgenres, from dark mafia romances to sweet teen stories, and self-published them on Amazon. None were huge blockbusters, but collectively, they sold around 50,000 copies, earning Ms. Hart six figures.”

Six figures is great compensation for an independent author; few make over $25,000 a year. The key is to sell lots of Kindle eBooks cheap. An eBook priced at $7.99 earns the author approximately $5.50 per book. Printed books earn far less due to printing and distribution costs. So Coral Hart’s strategy of flooding the market with low-quality but cheap eBooks can be very profitable. Most of her books are fast-paced romance novels with plenty of steamy sex, a genre that benefits primarily from great marketing rather than inspired authorship.

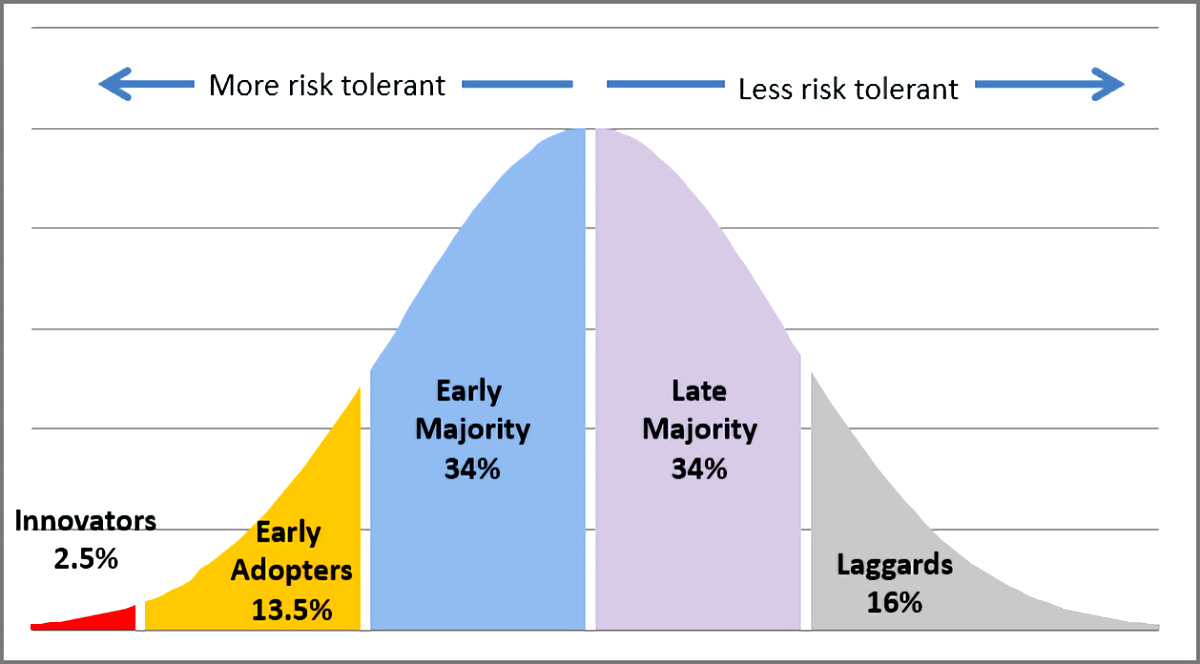

More than fifty years ago, marketers of new, innovative products learned that their customers could be segmented into five categories: innovators, early adopters, early majority, late majority, and laggards. Innovators and early adopters typically make up about 15 percent of the market, the majorities about 70 percent, and the laggards 15 percent.

Coral Hart is definitely an innovator. She was already an accomplished author when she chose to embrace AI as a writing tool. But today, nearly all the writers I know take pride in their personal writing style and wouldn’t surrender it to an automated writing app. Many readily use AI to do research, but would never ask AI to write a book. Over time, though, they may adopt much of what AI offers, such as asking it to write a draft chapter they can then edit, or to critique a chapter they have written.

Laggards are naturally conservative and slow to adopt new ideas and products. That’s fine; the world needs conservatives as much as innovators. But in my experience, a few authors are more than just cautious. They resent that AI has been trained on billions of words written by humans to express facts, ideas, and emotions. They belittle AI for doing little more than “sophisticated pattern matching” rather than “thinking” as we humans do. And even when AI seems to offer up profound, innovative insights, they denigrate it for not comprehending those insights.

As for me, over the last six decades I’ve seen many new technologies advance. Many were met with skepticism and even hostility. Yet, today they are woven into our lives. Will Artificial Intelligence be the same? At seventy-eight, I remain an optimist.

Ron

Great read. We were all optimist...I would welcome your thoughts and research on what practical safeguards on AI might look like. So much material is floating around in newspapers and articles that warns of bad outcomes but offers no solutions to prevent them. Best

Neil

Great article. You have hit on several factors everyone needs to come to grips with:

1. AI is inevitable; we can’t really regulate it.

2. AI will impact and, yes, eliminate a lot of simple white-collar work (law, market research, creative advertising, etc.)

3. People must try/experiment to understand its assets and liabilities. I use it both for drafting documents and for building even complex spreadsheet models. It gets me to 80% of a finished project very quickly.

4. We will almost always need humans for “the last mile” of research, content creation, coding. LLMs don’t really understand context and emotion.

I see a lot of concern about the “morality” and “judgment” of AI. Maybe that will be a problem. But its inevitability means we need to embrace it for its power and use for good vs. resisting it because it’s not human. Historically it’s very hard to find any technological advance that’s made the world worse vs. better. It’s about focusing it on progress not putting fear into everyone’s future.